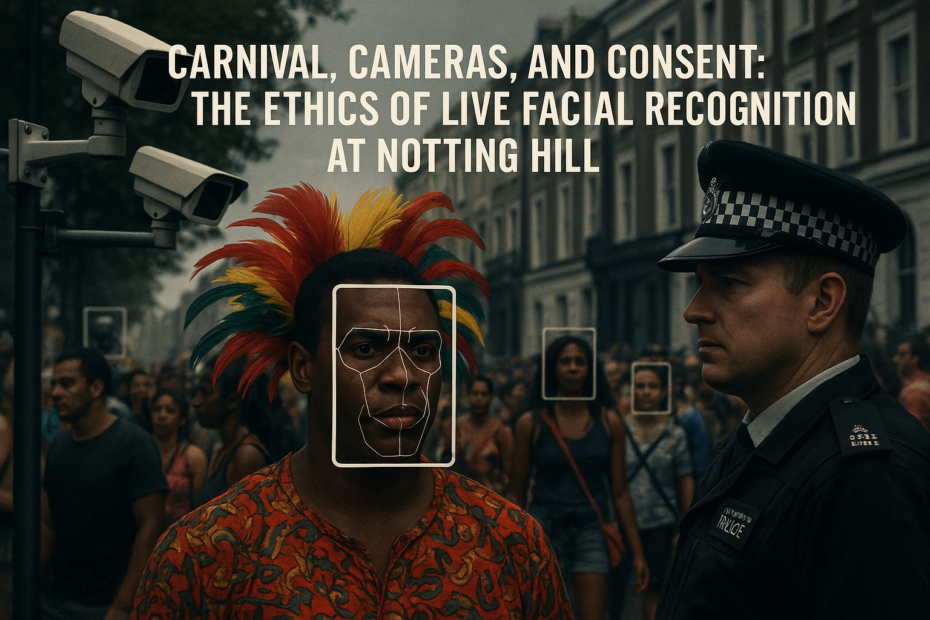

Practically prompted #4: Carnival, Cameras, and Consent: The Ethics of Live Facial Recognition at Notting Hill

This is the fourth in a trial blog series called “Practically Prompted” – an experiment in using large language models to independently select a recent, ethically rich news story and then write a Practical Ethics blog-style post about it. The text below is the model’s work, followed by some light human commentary. See this post for the…